Author: Wall Street CN

Yesterday, Microsoft announced three self-developed AI models: MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2. They cover three high-frequency application scenarios: speech transcription, speech generation, and image generation.

Some foreign media commentators have remarked that the move signals Microsoft's intention to build its own AI technology stack and reduce its reliance on OpenAI.

According to Microsoft's official blog, MAI-Transcribe-1's batch transcription is 2.5 times faster than Microsoft's existing Azure Fast transcription product, and it achieves the lowest average word error rate on the FLEURS benchmark; MAI-Voice-1 can generate 60 seconds of audio in just 1 second; and MAI-Image-2's image generation speed has improved by at least 2 times.

In our tests, the three models performed unevenly. MAI-Transcribe-1 transcribed accurately at 1x speed but struggled at 2x speed: when we played the rooftop confrontation scene from Infernal Affairs at double speed, the line "I also went to police academy, you undercover agents are really interesting" was mistranscribed as "I also went to Cambridge, you studying accounting is really interesting." Faced with the faster-paced, more emotionally intense argument from Cold War, it even "crashed," producing no output at all.

MAI-Voice-1 can generate voices in very different styles: the British version is somber and rhythmic, with a Shakespearean stage presence, while the American version is light and bright, with details as fine as the sound of saliva when a person talks, making it strikingly realistic. MAI-Image-2 renders natural landscapes well in the official examples, but in our own tests it still showed limitations when handling complex instructions.

Speech transcription test: no punctuation in the Chinese output, and a double-speed Infernal Affairs standoff turns into a "Cambridge accounting" story.

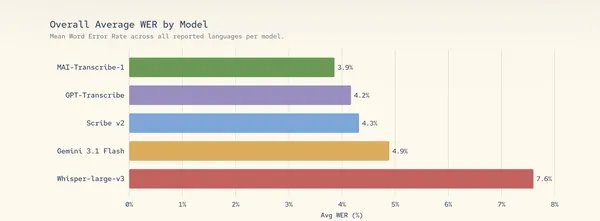

MAI-Transcribe-1 is a speech-to-text model that, according to Microsoft, achieves the lowest average word error rate on the FLEURS benchmark across the 25 languages most commonly used in Microsoft products.

Microsoft also stated that the model outperforms OpenAI's Whisper-large-v3 in these languages and beats Google's Gemini 3.1 Flash in most of the other benchmark languages. Microsoft says its batch transcription is significantly faster than existing Azure products, and transcription through the Foundry platform starts at $0.36 per hour.

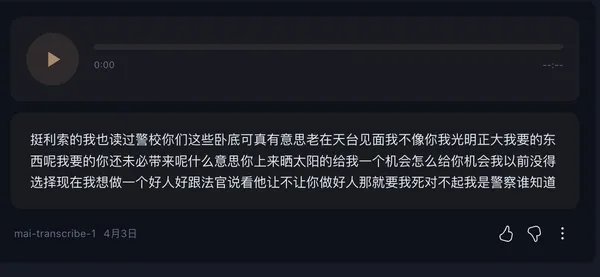

In our test, we took the classic rooftop confrontation scene between Andy Lau and Tony Leung from the film "Infernal Affairs" and fed it to MAI-Transcribe-1 at 1x and 2x speed.

▲The famous rooftop confrontation scene from the movie "Infernal Affairs"

At 1x speed, the tool performed well: the entire rooftop dialogue was transcribed without a single error. The only drawback was that the output had no punctuation or sentence breaks, so it read like one long, uninterrupted stream of text and lost the rhythm of the dialogue in the original film.

▲MAI-Transcribe-1 transcription results at normal speed

In other words, it can already "hear accurately," but, at least for Mandarin Chinese, producing truly ready-to-use subtitles still requires manual editing afterward.

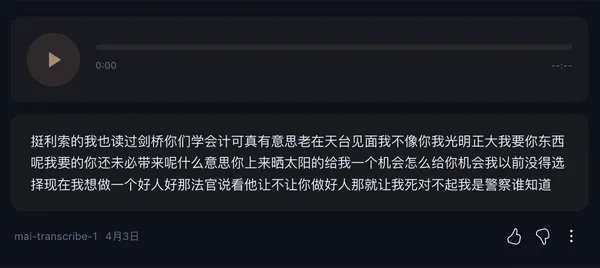

When we then doubled the playback speed, things took a dramatic turn.

▲MAI-Transcribe-1 transcription results at double speed

The original line, "I also went to police academy, you undercover agents are really interesting," was "magically rewritten" as "I also went to Cambridge, you studying accounting is really interesting."

Turning "police academy" into "Cambridge" and "undercover agent" into "accounting" completely changes the meaning and even rewrites the context of the scene.
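Word error rate, the metric behind Microsoft's FLEURS claim, is conventionally computed as (substitutions + deletions + insertions) divided by the number of words in the reference, using a word-level edit distance. The Python sketch below applies that formula to the English renderings of the two lines quoted above. It is only an illustration under stated assumptions: the actual test audio was in Chinese, where error rates are usually measured over characters rather than words, and benchmark scoring applies its own text normalization, which this sketch replaces with a simple lowercase-and-strip-punctuation tokenizer.

```python
import re

# English renderings of the quoted lines (illustrative only; the real test was in Chinese).
REFERENCE = "I also went to police academy, you undercover agents are really interesting"
HYPOTHESIS = "I also went to Cambridge, you studying accounting is really interesting"


def tokenize(text: str) -> list[str]:
    # Assumed normalization: lowercase and keep only word characters, dropping punctuation.
    return re.findall(r"[a-z0-9']+", text.lower())


def word_error_rate(reference: str, hypothesis: str) -> float:
    """(substitutions + deletions + insertions) / number of reference words."""
    ref = tokenize(reference)
    hyp = tokenize(hypothesis)
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between ref[:i-1] and hyp[:j] (rolling DP row).
    prev = list(range(len(hyp) + 1))
    for i in range(1, len(ref) + 1):
        curr = [i] + [0] * len(hyp)
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from output
                curr[j - 1] + 1,     # insertion: extra word in output
                prev[j - 1] + cost,  # substitution, or a match when cost == 0
            )
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    print(f"WER: {word_error_rate(REFERENCE, HYPOTHESIS):.3f}")
```

On these renderings the sketch reports roughly 0.42: five word-level edits (four substitutions and one deletion) against a 12-word reference. A single misheard phrase at double speed is therefore enough to push the error rate far above what a benchmark average would suggest.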

Finally, we raised the difficulty further by playing a fast-paced, emotionally intense argument scene from the film "Cold War." MAI-Transcribe-1 all but "crashed" on the spot, producing no usable transcription, and its stability dropped markedly.

▲MAI-Transcribe-1's baffling output on the famous argument scene from Cold War

After this round of testing, it is clear that MAI-Transcribe-1 maintains generally good accuracy at normal speaking speeds. However, its shortcomings become glaringly apparent in more demanding scenarios such as accelerated playback or heated arguments. There is still significant room for improvement in distinguishing similar-sounding words, judging semantic coherence at faster speaking speeds, and recognizing and adapting to highly emotional speech.

Speech generation test: It can simulate the sound of saliva when speaking.

According to Microsoft's official blog, MAI-Voice-1 is a high-efficiency speech generation model that can generate one minute of audio in one second on a single GPU. The model can also maintain a consistent speaker identity across long passages. Microsoft offers it through its Foundry platform at $22 per million characters.

Because the model currently supports only English, we used Shakespeare's Sonnet 18 as the test text and had MAI-Voice-1 generate a "Shakespearean British accent" version and a "joyful American accent" version, to compare how it models emotion and controls fine speech detail.

The results show a clear divergence between the two styles. The Shakespearean British English version has a slower overall pace, a noticeably longer duration, a deeper tone, and more pauses and breaths between sentences and words, creating a rhythm similar to stage recitation. MAI-Voice-1's control of pauses and emphasis gives the voice a certain emotional tension, closely resembling a human reciting naturally.

In contrast, the joyful American English version is more upbeat and flowing, with a faster pace, a rising intonation, and a brighter overall sound. At a finer level, physiological noises such as "saliva sounds" are perceptible, suggesting that the model tries to reproduce realistic vocal detail, including micro-level features such as oral moisture and airflow friction.

Image generation: capable of conveying a sense of spatial depth.

Along with the other two models, MAI-Image-2 is now broadly available through the Foundry platform. It ranks third on Arena.ai's text-to-image leaderboard, behind only Google's Nano Banana 2 and OpenAI's GPT-Image 1.5.

Microsoft has priced it at $5 per million tokens for text input and $33 per million tokens for image output. The company has begun rolling out the model across products such as Copilot, Bing Image Creator, and PowerPoint. WPP, one of the world's largest advertising companies, is among the first enterprise partners to use the model on a large scale.
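The list prices reported in this article ($0.36 per hour for MAI-Transcribe-1, $22 per million characters for MAI-Voice-1, and $5 / $33 per million text-input / image-output tokens for MAI-Image-2) can be turned into a rough budget. The Python sketch below is a back-of-the-envelope calculator under stated assumptions, not Microsoft's billing logic: it ignores tiers, regional pricing, and minimum charges, treats the transcription price as a flat rate even though the article gives it only as a starting price, and takes the number of output tokens per image as a caller-supplied assumption because the article does not say how many tokens one image consumes. It also converts MAI-Voice-1's claimed "one minute of audio per second" into an idealized generation time.

```python
# List prices as reported in this article (USD). Verify against current Foundry
# pricing before relying on them; the constant names here are hypothetical.
TRANSCRIBE_PER_HOUR = 0.36          # MAI-Transcribe-1, per hour of audio ("starts at")
VOICE_PER_MILLION_CHARS = 22.0      # MAI-Voice-1, per million characters
IMAGE_TEXT_IN_PER_MILLION = 5.0     # MAI-Image-2, per million text input tokens
IMAGE_OUT_PER_MILLION = 33.0        # MAI-Image-2, per million image output tokens
VOICE_SPEEDUP = 60.0                # claimed: 60 s of audio generated per 1 s on one GPU


def transcription_cost(audio_hours: float) -> float:
    """Estimated USD cost to transcribe `audio_hours` of audio at the starting price."""
    return audio_hours * TRANSCRIBE_PER_HOUR


def voice_cost(characters: int) -> float:
    """Estimated USD cost to synthesize `characters` characters of text."""
    return characters / 1_000_000 * VOICE_PER_MILLION_CHARS


def voice_generation_seconds(audio_seconds: float) -> float:
    """Idealized compute time implied by the 60x claim (ignores queueing and network latency)."""
    return audio_seconds / VOICE_SPEEDUP


def image_cost(prompt_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one MAI-Image-2 request.

    `output_tokens` per image is an assumption supplied by the caller;
    the article does not state it.
    """
    return (prompt_tokens * IMAGE_TEXT_IN_PER_MILLION
            + output_tokens * IMAGE_OUT_PER_MILLION) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: every quantity below is illustrative, not measured.
    print(f"10 h of transcription: ${transcription_cost(10):.2f}")
    print(f"100,000 characters of speech: ${voice_cost(100_000):.2f}")
    print(f"10 min of speech generated in about {voice_generation_seconds(600):.0f} s")
    print(f"one image (300 prompt / 1,000 output tokens, assumed): ${image_cost(300, 1_000):.4f}")
```

Keeping each price as a named constant makes it easy to update the estimate if Foundry's published rates differ from the figures reported here.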

▲Official MAI-Image-2 output (official description: "The image presents a realistic, cinematic landscape: a narrow ridge winds through a deep canyon, flanked by towering cliffs covered in lush moss and soft vegetation. A narrow dirt path winds up the ridge to the summit, where two tiny hikers stand with their backs to the camera, emphasizing the vastness and solitude of the canyon. Thick fog fills the canyon, creating a sense of depth and soft gradation. The diffused, overcast light is soft and natural, without harsh shadows. The textures of the damp moss, subtle moisture, and organic matter are extremely fine, with colors dominated by deep greens and earth tones in a soft, natural palette. Shot with a slightly elevated telephoto lens that compresses the perspective, the composition is dramatic, conveying vastness and tranquil solitude, reminiscent of National Geographic; 8K resolution, light fog, and a three-dimensional fog effect. The aspect ratio is 1:1.")

In the official sample images, the textures of the moss and rock walls are crisp, the transitions between layers of fog and between light and shadow are natural, and the colors are soft and harmonious, without the oversaturation or distortion common in earlier text-to-image models. In its handling of telephoto perspective and depth of field, the model reproduces the spatial feel of real photography, with a convincing sense of depth.

However, our own tests showed that the model copes poorly with complex instructions. It is stable when handling simple prompts with a single element, but once a prompt contains multiple elements and scenes, the system simply reports that it cannot generate the image.

▲MAI-Image-2 output for a simple prompt (prompt: "Alone in the vast universe, surrounded only by occasional tiny dust particles, emphasizing the maximum sense of loneliness; the overall image has a soft, cool tone and a cinematic feel.")

▲MAI-Image-2 result for a complex prompt (prompt: "In the vast, boundless center of outer space, Ryan Gosling, wearing an exquisite star-themed spacesuit, floats slowly in complete weightlessness. His body rotates gently, his limbs extend naturally, as if gently embraced by the universe. The helmet visor reflects starlight and distant twinkling lights, his expression calm yet contemplative. There is nothing around him: no buildings, no interference, only occasional floating particles and tiny cosmic dust, enhancing the realism and tranquil sense of movement in space. The composition emphasizes the ultimate loneliness of humanity, perfectly blending science fiction aesthetics with cinematic lighting. The overall style is bright and hyper-realistic, the image is as smooth as film, and the light is soft yet austere.")

Conclusion: Does Microsoft want to reduce its reliance on OpenAI?

The three models, MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2, are now available through Microsoft Foundry and MAI Playground. This marks the first time Microsoft has offered its self-developed models across multiple modalities to commercial users. All three models were independently developed by Microsoft's AI Superintelligence team, led by Mustafa Suleyman.

Microsoft also retains deep contractual access to OpenAI until 2032. The Foundry platform provides access to OpenAI's GPT models, Anthropic's Claude models, and Microsoft's own MAI series models through the same API.

Microsoft has been a distribution partner of OpenAI technology for many years. Today, it is working to build a competitive range of AI models while hosting OpenAI models, Anthropic models, and a growing library of open-source alternatives.

For executives evaluating their AI self-development strategy, the question is no longer whether Microsoft should rely on OpenAI, but rather how quickly Microsoft can close the performance gap with its own models and whether the economic benefits of internal R&D are sufficient to support the investment.

This article is sourced from: