Author: Wall Street CN

Anthropic today announced Project Glasswing. The project was prompted by a powerful new model Anthropic has trained, Claude Mythos Preview, which is the model referenced in the Claude Code (CC) source code leak a few days ago.

The project was jointly launched by 12 organizations: Amazon Web Services (AWS), Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorgan Chase, the Linux Foundation, Microsoft, Nvidia, Palo Alto Networks, and Anthropic itself.

Put simply, the model is considered powerful enough that it is being tested under restricted conditions: only approved organizations may use it internally, and it will not be released to the public. How powerful is it? According to the benchmark data, its coding and reasoning capabilities far surpass those of Opus 4.6.

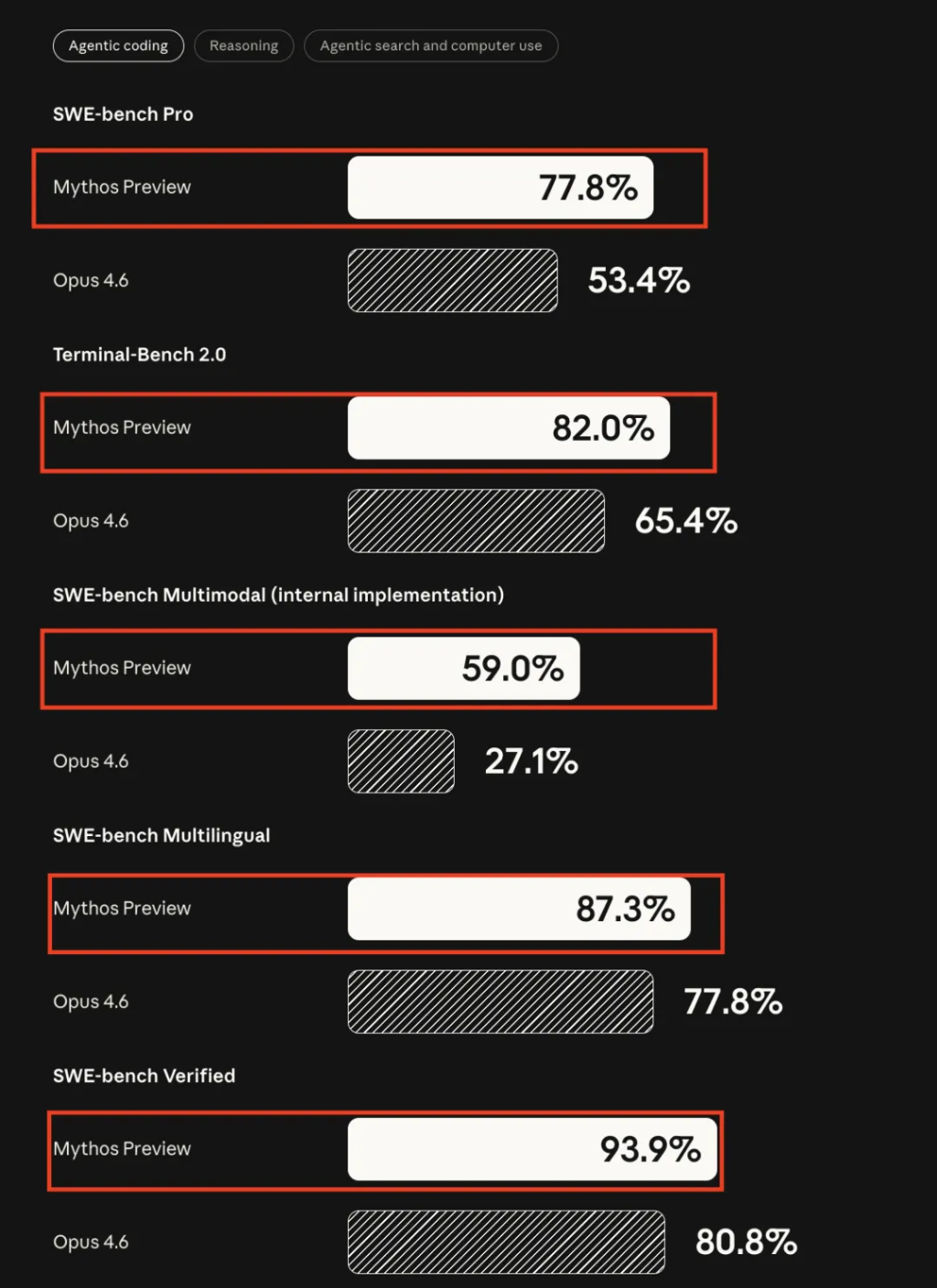

Code:

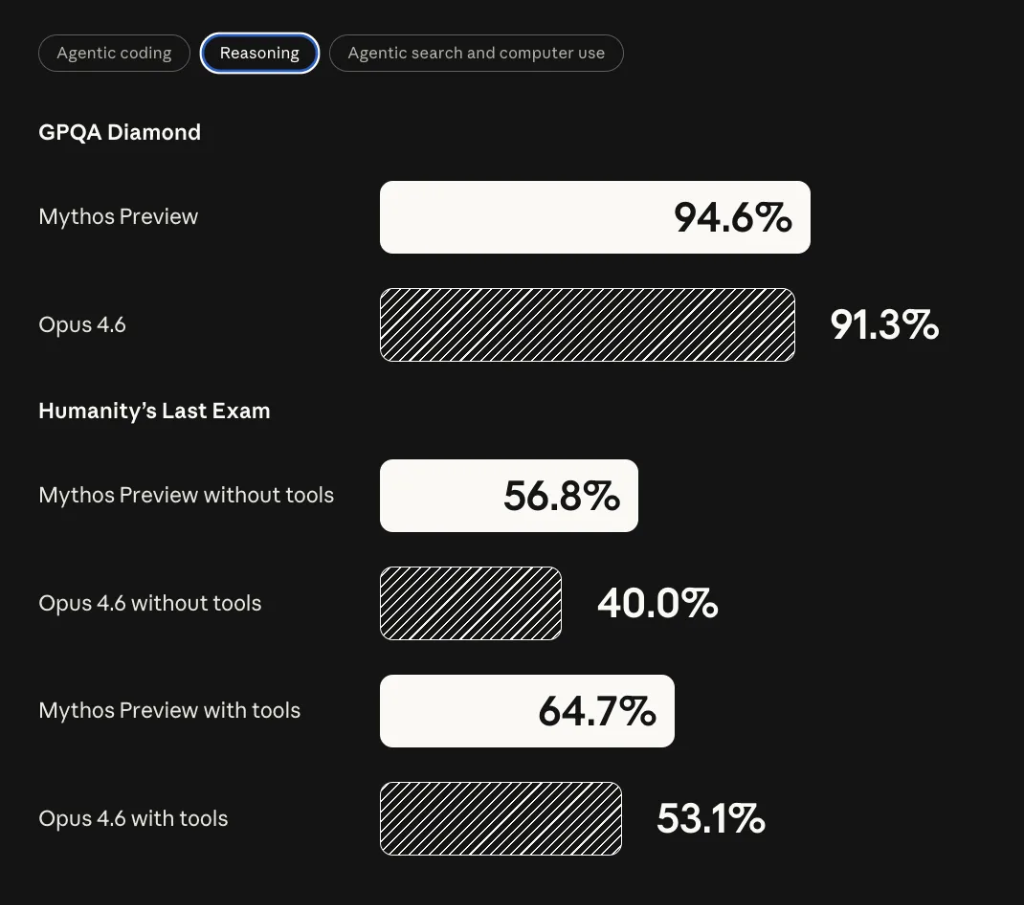

Reasoning:

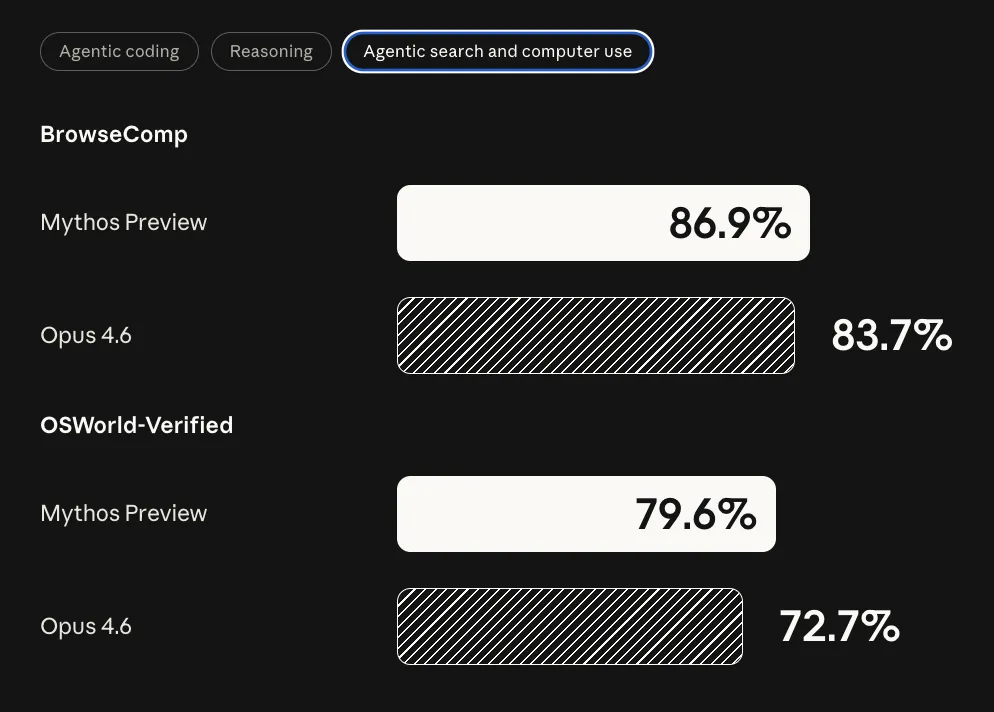

Search and Computer Use

Opus is Latin for "work" (as in magnum opus, a masterpiece), while Mythos is Greek for "myth." Anthropic's CEO and a host of prominent figures from its partner organizations have publicly endorsed the project.

Anthropic has explicitly stated that it does not intend to make Claude Mythos Preview publicly available. Its long-term goal, however, is to let users safely access models with equivalent capabilities. To that end, it plans to first develop and validate the necessary safeguards on an upcoming Claude Opus model, iterating under controlled risk conditions before gradually expanding the rollout. A new version of Opus offering these capabilities may therefore be released soon.

Let's take a closer look at what Project Glasswing actually is.

What did this model discover?

Over the past few weeks, Anthropic has used Claude Mythos Preview to scan the world’s major operating systems, browsers and other important software.

The result: thousands of previously unknown zero-day vulnerabilities, many of them rated high-risk.

Several specific examples:

A vulnerability in OpenBSD that had existed for 27 years. OpenBSD is known for its security and is used to run critical infrastructure such as firewalls. The flaw allowed an attacker to remotely crash a target machine simply by connecting to it.

A vulnerability in FFmpeg that had existed for 16 years. FFmpeg handles video encoding and decoding in countless software programs. The line of code containing the flaw had been hit 5 million times by automated testing tools without the bug ever being caught.

In the Linux kernel, the model autonomously discovered and chained together multiple vulnerabilities, allowing an attacker to escalate from ordinary user privileges to complete control of the machine.

All of the vulnerabilities above have been reported to the relevant maintainers and have since been fixed. For the remaining vulnerabilities, Anthropic has published cryptographic hashes in place of the details; the full details will be released once fixes are in place.
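Publishing a hash now and the details later works as a commitment: the hash shows a finding existed at publication time without revealing it, and anyone can later check the disclosed report against it. The sketch below is a minimal illustration under stated assumptions. It uses SHA-256 with a random salt, and the function names and report text are hypothetical; the article does not say which hash function or report format Anthropic used.

```python
import hashlib
import secrets


def commit(report: str) -> tuple[str, bytes]:
    """Return (public digest, secret salt) for a hypothetical vulnerability report.

    The random salt keeps short or predictable reports from being guessed
    by hashing candidate texts until one matches the published digest.
    """
    salt = secrets.token_bytes(16)
    digest = hashlib.sha256(salt + report.encode("utf-8")).hexdigest()
    return digest, salt


def verify(digest: str, salt: bytes, report: str) -> bool:
    """Check a later-disclosed report and salt against the published digest."""
    return hashlib.sha256(salt + report.encode("utf-8")).hexdigest() == digest


# Hypothetical report text, for illustration only.
report = "example-project: out-of-bounds read in parser (details withheld)"
published_digest, salt = commit(report)  # only the digest is published now
print(verify(published_digest, salt, report))               # True
print(verify(published_digest, salt, report + " (edited)"))  # False
```

Any change to the disclosed text, even one character, produces a different digest, which is what makes the early publication binding.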

Why do this?

Anthropic's assessment is that, at finding and exploiting software vulnerabilities, AI models now outperform all but a handful of top human experts.

The question is not whether this capability will spread, but when.

Global cybercrime causes an estimated $500 billion in economic losses annually. Attacks targeting healthcare systems, energy infrastructure, and government agencies have caused substantial damage and pose a continued threat to civilian and military infrastructure.

AI has significantly reduced the cost, barriers to entry, and level of expertise required to launch such attacks.

Anthropic's reasoning: rather than wait for others to turn this capability to offense, it is better to put it to defensive use first.

How will the project work?

Project Glasswing currently has two tiers.

The first tier consists of 12 founding partners who will gain access to Claude Mythos Preview to scan and patch vulnerabilities in their core systems, with a focus on areas such as local vulnerability detection, binary black-box testing, endpoint security, and penetration testing.

The second tier consists of more than 40 other organizations that build or maintain critical software infrastructure, which will also gain access to the model to scan their own systems and open-source software.

Anthropic has committed up to $100 million in model usage credits. After the research preview, participants will be able to access Claude Mythos Preview commercially at $25 per million input tokens and $125 per million output tokens, via the Claude API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry.
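At these rates, cost scales linearly with token counts. The short Python sketch below works through the arithmetic using the quoted prices. The function name and example token counts are hypothetical, and the sketch ignores any discounts or other billing details the article does not mention.

```python
# Prices quoted in the article, in US dollars per million tokens.
INPUT_PRICE_PER_M = 25.0
OUTPUT_PRICE_PER_M = 125.0


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimated dollar cost of a workload at the quoted list prices."""
    return (input_tokens * INPUT_PRICE_PER_M
            + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


# Hypothetical scan: 2 million tokens of source code in, 200,000 tokens of findings out.
print(request_cost(2_000_000, 200_000))  # 75.0
```

By the same arithmetic, $100 million at list price would cover about 4 trillion input tokens, or about 800 billion output tokens.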

In addition, Anthropic donated $2.5 million through the Linux Foundation to Alpha-Omega and OpenSSF and $1.5 million to the Apache Software Foundation, for a total of $4 million, to help open-source maintainers respond to this new landscape. Open-source maintainers can apply for access through Claude for Open Source.

Next steps

On information sharing, partners will share findings and best practices as widely as possible. Anthropic has committed to publishing a progress report within 90 days, covering the number of vulnerabilities discovered, the issues fixed, and any improvements that can be disclosed.

On policy, Anthropic will work with major security organizations to develop practical recommendations in the following areas: vulnerability disclosure processes, software update processes, open-source and supply chain security, the secure software development lifecycle, standards for regulated industries, scaling and automating vulnerability classification, and patch automation.
